Microservices have redefined how developers design, build, and deploy mission-critical production software. It’s therefore essential to understand what microservices are, what they mean for developers, and the benefits and challenges they create for software teams.

What are Microservices?

Microservices are a software architecture pattern that structures an application as a collection of small, independent, and loosely coupled services.

Consider, for example, an application that consists of three primary services:

- Account service

- Inventory service

- Shipping service

Each service is maintained by an independent team and is deployed in an independent environment. Therefore, each service operates as its own entity that focuses on specific business functions.

However, no single service is enough for the entire app to function. To fulfill a client request, each service communicates with the other microservices through well-defined APIs.
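As a rough illustration of how the three services listed above might cooperate, here is a minimal in-process sketch. The class names, method names, and data shapes are assumptions made for this example and are not taken from any real system; in an actual deployment each method call would be a network request (for example HTTP or gRPC) to a separately deployed service.

```python
from dataclasses import dataclass


@dataclass
class AccountService:
    """Owns customer data. Other services only use its public methods."""
    accounts: dict  # hypothetical storage: account_id -> {"address": ...}

    def get_address(self, account_id: str) -> str:
        return self.accounts[account_id]["address"]


@dataclass
class InventoryService:
    """Owns stock levels. Other services never touch `stock` directly."""
    stock: dict  # hypothetical storage: sku -> units available

    def reserve(self, sku: str, quantity: int) -> bool:
        available = self.stock.get(sku, 0)
        if available < quantity:
            return False
        self.stock[sku] = available - quantity
        return True


class ShippingService:
    """Fulfills a client request by calling the other two services' APIs."""

    def __init__(self, accounts: AccountService, inventory: InventoryService):
        self.accounts = accounts
        self.inventory = inventory

    def ship(self, account_id: str, sku: str, quantity: int) -> dict:
        # Ask the inventory service to reserve stock before shipping.
        if not self.inventory.reserve(sku, quantity):
            return {"status": "rejected", "reason": "out of stock"}
        # Ask the account service where the order should go.
        address = self.accounts.get_address(account_id)
        return {"status": "shipped", "address": address}
```

The point of the sketch is the boundary: the shipping service depends only on the methods the other services expose, so each team can change its internals without breaking the others.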

Unlike monolithic architectures, microservices allow for modular development, deployment, and scalability, providing flexibility and adaptability to changing requirements.

Microservices vs. Monoliths

When building software, one of the critical decisions you must make is whether to structure your app as a monolith or as a set of microservices.

What are Monolith Applications?

A monolithic architecture encourages developers to build an application as a single entity. This means the entire application runs in one ecosystem, with no distributed parts. The application is deployed as one artifact, so any update requires redeploying all of its functionality.

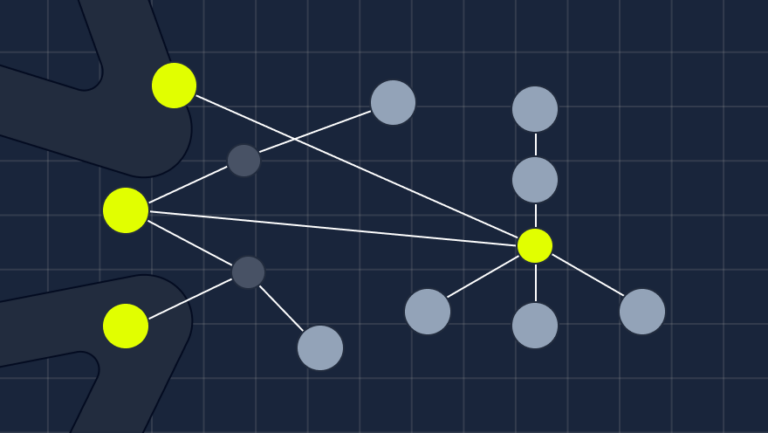

Figure: A sample monolith

In the diagram above, the system communicates through one Business Layer and one database to process a request. This means that changing one part of your app requires you to redeploy your app. This also means that scaling only a part of your app is impossible. Either you scale the entire app or don’t scale at all.

What are Microservices Applications?

On the other end of the spectrum, you have microservices. Microservices break down an app into smaller services that function independently. This means that developers can scale parts of the application at a given time and only deploy the required part rather than deploying the whole app.

Benefits

Microservices offer numerous benefits:

- Service Independence: Since each service is developed and deployed independently, scaling specific parts of an application becomes easy and adds little overhead. For example, a movie ticketing application built using microservices might scale up its booking service when a new movie is released while leaving the notification service at its current capacity.

- Domain-Driven Design: With microservices, developers focus on understanding the business domain first. After that, it is easier to model each microservice around a domain entity, so that the service boundaries reflect the business domain.

- Differentiating Technology Stack: When independent teams build and maintain a service, they no longer have to use one language for the entire application. Instead, each team can use the programming language that best suits its task and rely on a well-defined API to communicate with the other services.

Challenges of microservices

However, adopting microservices is not free of challenges. Like all architectures, microservices, too, have their tradeoffs.

- Difficulty in Observability: With microservices, teams introduce many moving parts into an application that’s often distributed in nature. Because of this, it’s hard to get a high-level view of the entire application at a glance, and monitoring and observing a microservice-based application becomes increasingly difficult. For this reason, many organizations adopt monitoring and observability tools very early on.

- Added Latency: With more moving parts, more requests are made within your application. A single client request takes longer because a service must communicate with several other services to compile a response. This increases the round trip time and can slow down an application.
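The latency tradeoff can be made concrete with some simple arithmetic. The sketch below is only a model: the function names, the per-hop overhead parameter, and the latency figures are all assumptions chosen for illustration, not measurements. It compares calling downstream services one after another with calling them concurrently.

```python
def sequential_latency_ms(downstream_ms: dict, per_hop_overhead_ms: float = 0) -> float:
    """Total time when each downstream call waits for the previous one."""
    return sum(ms + per_hop_overhead_ms for ms in downstream_ms.values())


def concurrent_latency_ms(downstream_ms: dict, per_hop_overhead_ms: float = 0) -> float:
    """Total time when independent calls are issued at once: the slowest one dominates."""
    return max((ms + per_hop_overhead_ms for ms in downstream_ms.values()), default=0)


# Hypothetical processing times for three downstream services, in milliseconds.
calls = {"account": 20, "inventory": 35, "shipping": 50}

print(sequential_latency_ms(calls, per_hop_overhead_ms=5))  # 120
print(concurrent_latency_ms(calls, per_hop_overhead_ms=5))  # 55
```

Under these assumed numbers, every extra hop adds to the sequential total, while concurrent fan-out is bounded by the slowest dependency. Concurrency only helps when the calls do not depend on each other's results.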

The importance of observability

Observability refers to the ability to gain insights and monitor the behavior and performance of individual microservices and the entire distributed system. This includes collecting, analyzing, and visualizing data from various sources such as logs, metrics, traces, and events. By implementing effective observability practices, developers and operations teams can quickly identify and resolve issues, understand service dependencies, and optimize the system’s performance. It plays a crucial role in maintaining system reliability, identifying bottlenecks, detecting errors, and ensuring that microservices work harmoniously together. This allows organizations to deliver more reliable and efficient software solutions.

Microservices observability tools

There are several tools for microservices observability. They typically fall under the following categories:

- Application Performance Monitoring (APM) – APMs are a strong and well-established category that monitors production. Geared at helping DevOps and SREs, APMs ensure system availability and optimize the service performance of the production environment, to ensure the organization meets its SLAs. Specifically, this is done by collecting data from production and alerting about discrepancies.

- Open-Source – OpenTelemetry (OTel), an open source project from the Cloud Native Computing Foundation, which standardized the distributed tracing method, has been growing in popularity and adoption. The OTel framework provides SDKs, APIs and tools for collecting and correlating telemetry data (logs, traces, and metrics) from various interactions (APIs, messaging frameworks, DB queries, and more) in cloud-native distributed systems.

Distributed tracing data collected by OTel can be used for identifying errors, issues, and bottlenecks in microservices and distributed architectures. To do so, the data needs to be exported to external solutions, either open source or commercial.

Some of the most popular open source solutions are Jaeger and Zipkin. Jaeger, for example, enables users to query OTel data and view the results in the Jaeger UI. It provides basic search and filtering capabilities, like filtering for spans by their services, operations, attributes, timeframe and duration.

- Next Gen Distributed Tracing Observability – These tools make distributed tracing accessible and actionable for developers. Such tools, like Helios, provide insights into which actions triggered which results, which service correlations exist, and what the impact of a code change is on architecture components.
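To show what distributed tracing involves under the hood, here is a minimal standard-library sketch of trace context propagation. It is deliberately not the OpenTelemetry API: the `span` helper, the `SPANS` list, the handler functions, and the `x-trace-id` / `x-parent-span-id` header names are all hypothetical and exist only for this illustration. Real tools use standardized headers and export spans to a backend such as Jaeger or Zipkin.

```python
import time
import uuid
from contextlib import contextmanager

# Stand-in for a tracing backend; a real system would export spans instead.
SPANS = []


@contextmanager
def span(service: str, operation: str, headers: dict):
    """Record one unit of work and yield the headers to pass downstream."""
    # Reuse the caller's trace ID if present; otherwise this is a new trace.
    trace_id = headers.get("x-trace-id") or uuid.uuid4().hex
    parent_id = headers.get("x-parent-span-id")
    span_id = uuid.uuid4().hex[:16]
    start = time.perf_counter()
    try:
        # Downstream calls carry the same trace ID and name this span as parent.
        yield {"x-trace-id": trace_id, "x-parent-span-id": span_id}
    finally:
        SPANS.append({
            "trace_id": trace_id,
            "span_id": span_id,
            "parent_id": parent_id,
            "service": service,
            "operation": operation,
            "duration_ms": (time.perf_counter() - start) * 1000,
        })


def inventory_handler(headers: dict) -> None:
    with span("inventory", "reserve", headers):
        pass  # the service's real work would happen here


def shipping_handler(headers: dict) -> None:
    with span("shipping", "ship", headers) as outgoing:
        # Simulates a network call that forwards the trace headers.
        inventory_handler(outgoing)
```

Calling `shipping_handler({})` records two spans that share one trace ID, with the inventory span pointing at the shipping span as its parent. That shared ID and parent link are what let a tracing UI stitch separate services into a single request timeline.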

Are microservices the right choice for your organization?

To determine if a microservices architecture is right for you, there are a few questions you need to ask yourself:

- Are scalability and flexibility important in your organization?

- Is your dev team familiar with the microservices paradigm?

- How easily can you separate your app into small functioning components?

- Can your team easily be broken up into ‘squads’ that can independently own each functioning component?

Best Practices for adopting microservices

If you’ve decided to build microservices apps, consider these best practices:

- Single Database Per Service: Just as each service is independent, it’s essential to give each service its own database when building microservices. This is done mainly to avoid database bottlenecks. Teams can independently scale their database throughput up and down based on the expected workload for each service. It also encourages teams to pick the database that’s best suited for their domain.

- Using API Gateways for Service Communication: An API gateway provides a single entry point for clients to communicate with microservices. Thus, it allows developers to integrate authentication, routing, load balancing, and service discovery, simplifying the interaction between services and external clients.

- Integrate Observability: Integrating observability early on in your microservices-based application lets you gain insights into the behavior and performance of the system as a whole and its services. It enables teams to collect and analyze data from various sources, such as logs, metrics, and traces, to understand the system’s internal state and identify issues or potential bottlenecks.
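The API gateway practice above can be sketched in a few lines. This is a simplified, in-process model under stated assumptions: the `ApiGateway` class, its token check, and the path prefixes are hypothetical, and a production gateway would also handle load balancing, service discovery, and real network forwarding.

```python
from typing import Callable, Dict, Iterable, Tuple

# A handler stands in for a backend service: it receives the remaining path
# and returns (status_code, body).
Handler = Callable[[str], Tuple[int, str]]


class ApiGateway:
    """Single entry point that authenticates, then routes by path prefix."""

    def __init__(self, valid_tokens: Iterable[str]):
        self._routes: Dict[str, Handler] = {}
        self._valid_tokens = set(valid_tokens)

    def register(self, prefix: str, handler: Handler) -> None:
        self._routes[prefix] = handler

    def handle(self, path: str, token: str) -> Tuple[int, str]:
        # Authentication happens once, at the edge, for every service.
        if token not in self._valid_tokens:
            return 401, "unauthorized"
        # Try longer prefixes first so the most specific route wins.
        for prefix in sorted(self._routes, key=len, reverse=True):
            if path == prefix or path.startswith(prefix + "/"):
                return self._routes[prefix](path[len(prefix):] or "/")
        return 404, "no service for path"
```

A client would then talk only to the gateway, for example `gateway.handle("/accounts/42", token)`, without knowing where the account service runs. Centralizing authentication and routing this way keeps those concerns out of each individual service.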